Source: http://www.3dnews.ru/821006/page-2.html

Hello everyone,

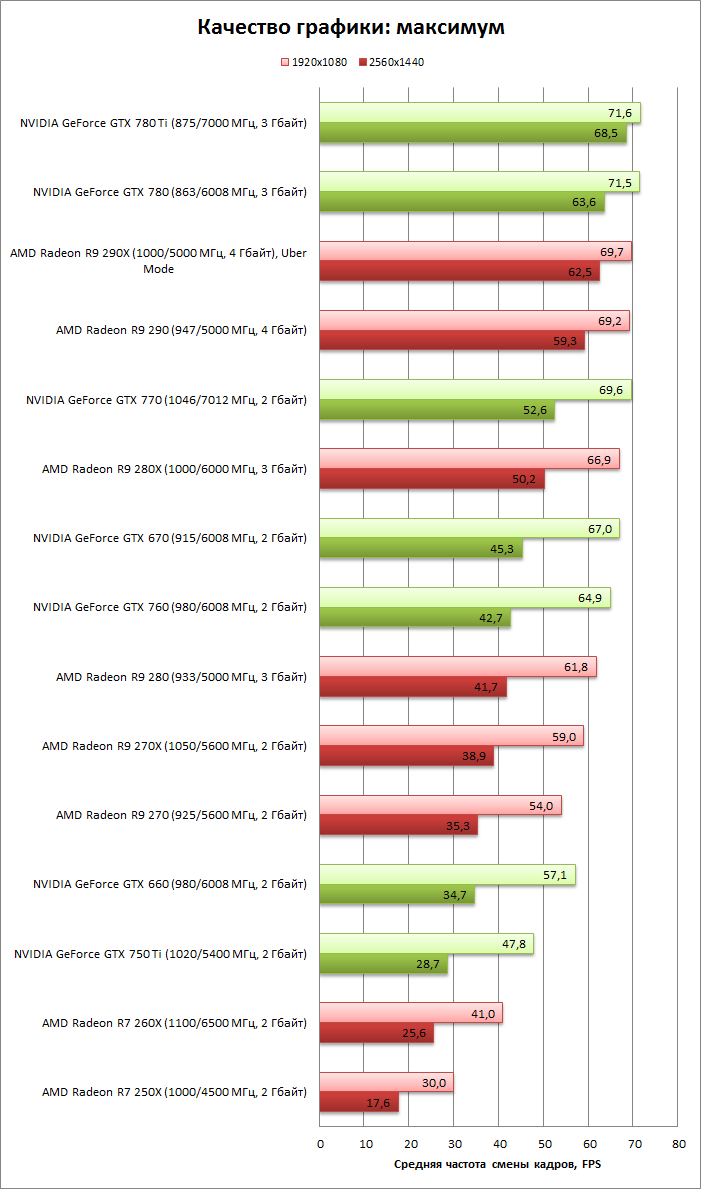

an interesting article appeared on the Russian portal 3DNews about GPU benchmarking in World of Tanks at maximum graphics settings. The tested configuration was:

CPU: Intel Core i7-3960X @ 4.6 GHz (100×46)

Motherboard: ASUS P9X79 Pro

SSD: Intel SSD 520 240 GB

System: Windows 7 Ultimate X64 Service Pack 1

Drivers – AMD: AMD Catalyst 14.4 WHQL, Nvidia: 335.23 WHQL

They tested the average FPS. The tested resolutions were 1920×1080 (lighter) and 2560×1440 (darker).

Since I can’t properly edit the picture:

МГц = MHz

Гбайт = Gigabytes

And the result?

NVIDIA Geforce GTX 760 ;)

GTX660 and waiting for real Maxwell to upgrade :)

Same here, after seeing what the 750 Ti can do with just 75 W and on 28 nm. Can't wait!

I have two GTX 760s, yet the game struggles to maintain a constant 60 fps

My reaction to your post, since I play on an HP laptop:

https://www.youtube.com/watch?v=rasZzenuYxI&feature=kp

Poor you :(

Doesn’t matter what GPU(s) you have. You need a single-core 10 GHz processor, SSD, max 1 GB of RAM and “russian playerbase” approved wooden casing.

Oh, and remember to buy your premium account for this year, you don’t want them to starve, now do you?

LOL… funny ‘cos it’s true… this game runs on a single core and the hard drive

The game doesn’t support SLI or Crossfire (2x GPU)

Didn’t you know that SLI isn’t working properly in WoT? Disable it, it will go smoother

I have a GTX 760 too. Terrible FPS with an i7 4770: 40-50

I have a GTX 760 and an i5 K edition. I play in a 1268×1024 window, foliage off, decals medium -> the game has 65 fps average .. I tried decals high and had 45-50 fps .. after 9.0, because when there are a lot of crappy graphics effects one can see the situation.. I prefer lower graphics and good gameplay for better SA over the super-high graphics those kids want ..

yeah BTW my game runs on an SSD

was the i7 @ 4.6 used to try and remove any CPU bottleneck?

pointless testing GFX cards on a CPU bound game really

they might have done the same test at stock speeds as well to give a better picture of the benefits of the CPU OC.

^^this.

“pointless testing GFX cards on a CPU bound game really

they might have done the same test at stock speeds as well to give a better picture of the benefits of the CPU OC.”

Agree 100%.

Benchmark this with an AMD FX-8XXX processor and remember to get some tissues before you start, you’re going to cry a lot. Intel does relatively well in this game but AMD… not so much.

While at it, may I present to you Himmelsdorf on Ultra settings at 1920×1080, stock CPU benchmarks (all credits to Warynsky on the WoT EU forums): http://pclab.pl/zdjecia/artykuly/focus/cpu2013/def/wot_1920.png

I have the R9 270X and I’m not getting what they say; think I need an SSD too.

I have a 270X too!! :] I get about what they got. What is your CPU clock speed? Mine is 4.2 GHz; when I increased the CPU from 3.4 to 4.2 it made a big difference for me: mid 40s fps to around 60 fps

Just set to what it came out of the box at. Might try clocking it to 4.2 then, thanks

It really is the CPU, doesn’t really matter what GPU you’re using.

funny how they used an eXtreme edition i7 CPU, yet the game can utilize one core only :D

if they used a 10 year old Intel Celeron, water cooled, OCed @ 6 GHz they would see 2x better results.

No they won’t, you’ve got the theory all wrong

Not exactly – the newer CPU would most likely still beat the Celeron @ 6GHz (or even at a higher frequency) thanks to being able to perform many more instructions per clock

A bit like comparing the single-core performance of an i7 vs AMD’s FX series…
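To put rough numbers on the instructions-per-clock point, here is a minimal sketch in Python. It assumes the common simplification that single-thread throughput is roughly IPC times clock speed; the IPC figures are made-up placeholders for illustration, not measurements of any real Celeron or i7, and the function names are hypothetical.

```python
# Simplified model: single-thread throughput ~ IPC * clock frequency.
# All IPC values used with this are hypothetical placeholders.

def instructions_per_second(ipc: float, clock_ghz: float) -> float:
    """Instructions retired per second under the simple IPC * clock model."""
    return ipc * clock_ghz * 1e9


def break_even_ipc_ratio(new_clock_ghz: float, old_clock_ghz: float) -> float:
    """How many times higher the newer chip's IPC must be to match the older,
    higher-clocked chip."""
    return old_clock_ghz / new_clock_ghz


if __name__ == "__main__":
    # Clocks from the thread: old chip pushed to 6.0 GHz, test rig at 4.6 GHz.
    ratio = break_even_ipc_ratio(new_clock_ghz=4.6, old_clock_ghz=6.0)
    print(f"Newer CPU needs about {ratio:.2f}x the IPC to break even")
```

Under this model the 4.6 GHz chip only needs around 1.3 times the per-clock throughput of the 6 GHz chip to come out ahead, which is the argument made above.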

Why are they wasting their time benchmarking modern graphics cards? We know they can run on max settings at high fps. But people like me who can only get their hands on a 10 year old PC need work to be done so we can get more than the only-just-playable 15 fps at minimum settings (no smoke at all) on 1080 by 768 that I live with. I have an A7600GT 256 MB graphics card (an AGP card); now I’d like to see them benchmark that, if they care about us people who don’t have $1500 laying around for a new PC. Wonder why I have terrible stats? I thought your tanks were supposed to take a while to react to your commands, apparently not according to some :-P

P.S. Can I really OC my old P4 to something like 4.6 GHz? I know 6 GHz is an exaggeration. It’s a 3.0 GHz at the moment, anyone know?

It’s cheaper to buy another used, better computer for 100-200$. For overclocking you need an improved cooling system. I bought a rig that runs WoT on high for this money. Screen was not included ofc

What you could do is check what the best possible PC modules are that you can put in your computer (as in, you have a socket xxx so the best possible choice for socket xxx is a pentium xxxx and so on), search for the best performing graphics card for the AGP slot and buy as much RAM of the right type and best frequency and CL timings as you can put in your rig (you probably use XP so don’t bother putting in more than 3.5 GB). All this stuff you need to search for on eBay or similar sites; it will cost maybe 50 dollars together, so it’s the cheapest way to go. You can’t do more.

You could build a much much better pc for about 700$. 10yr old pc is less than a calculator.

you could build one for about $400 if you were to use the old case, possibly the PSU, get used parts. I mean, getting a refurb computer for $100 and tossing in something like a 550 or 650 would be about $300-$500 depending on how much you spend on the refurb, and that would probably suit you.

I agree, if you enjoy PC games, you should upgrade your hardware. My friend just upgraded his for around 1000€ and he got a new motherboard, an Intel i7 4770K, a GTX 760, a Corsair H105 and also a new computer case, but every part he ordered was brand spanking new. Try to find used parts that are in OK condition, and you honestly do not need anything higher than an Intel i5 atm.

I am also planning an upgrade of my hardware this month. I’m going to sell my Crosshair V Formula-Z motherboard and AMD FX-8150 CPU and get an Intel i7 4770K as well, plus a MB that fits the i7 (Haswell socket). I am not expecting to get more than half of what I paid for the CPU and MB.

So what’s this a *STOMP* AMD cause their vid cards are always slower and lower quality than NVIDIA? XD

Ohh lol…

Such idiot. Meny gut trol

Troll or not;

I agree. Radeon cards ARE worse in gaming sector. Cheap Radeon cards > cheap Nvidias, but if you are looking for expensive gaming system Nvidia is the only answer.

huh!? you sure you’re not just wrong

let’s look at the top of the chart, shall we? 780Ti – 630$ while 290X – 425$ (prices from NewEgg.com)

let’s say you want a dual config: 1260$ vs 850$, in that difference you can afford a fucking 3rd 290X – fucking amazing
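For anyone checking the arithmetic, a quick sketch in Python using only the two prices quoted in the comment above (taken as given, not re-verified against NewEgg); the variable and function names are just for illustration.

```python
# Prices in USD exactly as quoted in the comment above.
PRICE_780TI = 630
PRICE_290X = 425


def dual_config_cost(unit_price: int) -> int:
    """Cost of two identical cards."""
    return 2 * unit_price


if __name__ == "__main__":
    gap = dual_config_cost(PRICE_780TI) - dual_config_cost(PRICE_290X)
    shortfall = PRICE_290X - gap
    print(f"Dual 780 Ti: ${dual_config_cost(PRICE_780TI)}")
    print(f"Dual 290X:   ${dual_config_cost(PRICE_290X)}")
    print(f"Difference:  ${gap} (${shortfall} short of a third 290X)")
```

At those prices the difference comes to $410, so a third 290X lands within $15 of the dual 780 Ti budget.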

no! you should buy the most expensive thing, not because they’re better, but because you deserve it!

Yeah, no.

GTX780Ti is a lot better than 290X. 290X can be compared to non-titan version – GTX780 which is often cheaper and performs better in most games.

With your logic – why buy R290X when you can have 3 R260X.

AMD is crap and times when Radeons were better than Nvidia in top sector are gone. Face it finally. AMD is losing market share, Nvidia is growing (Radeons still control quite a lot because they are often used in laptops/shit PCs).

Check yourself before you shrek yourself.

If you could say things that are true, that could be great and I won’t need to write this post.

How can you say that Radeons are crap? Are they not performing? There are some games where Nvidia wins and some where AMD wins. They are about equal. Can you provide info on how AMD’s share is shrinking?

I am here, for you.

1. Top shelf Radeons are more expensive and less efficient than Nvidia (fact)

2. Majority of game companies prefer cooperation with Nvidia (my observation)

3. Google “nvidia market share”. Currently AMD’s market share is falling while Nvidia’s is steadily rising. Intel is leading (tons of integrated GPUs in laptops or cheap desktops), AMD is behind it (very good cards for non-advanced users) and then there’s Nvidia. If I remember correctly, AMD lost 10% market share to Nvidia and their brilliant 700 series.

NViDIA = AMD in same price categories.

So yeah, it’s BS what you say

The only BS is what you wrote, fanboi.

I am with a GTX 760, moron.

You are a very amusing specimen

I think the guys responsible for Watch Dogs being (un)optimized lent WG a hand with their (un)optimization department. :P

Jokes aside, I can totally agree with the above comments. It’s kinda pointless to test GPUs on a CPU heavy game. It’s the same as Planetside 2 pretty much, the game runs almost entirely on the CPU. So WoT and other similar games that use this or an engine similar in nature are mostly gonna be CPU oriented; the GPU is there to just show the picture but not calculate, render, etc. everything else in the game.

My understanding is that the shit efficiency of Watch Dogs is because it was built to work with consoles and then ported poorly to PC.

Tell me a console game that has not suffered in one way or another when ported to PC… At least most ported games are not like Dark Souls 1, remember that disaster? One guy spent his free time modding DS1 to be playable on PC and even gave it improved graphics; he is a hero in my book and should have been HIRED by the DS dev team.

I’m definitely bottom of the chart!

I usually get 20-35fps – I am total laptop warrior!

Huge detailed WoT GPU test (in Czech) with many graphs:

http://www.cnews.cz/testy/world-tanks-90-test-grafickych-karet-nejen/strana/0/3

This is by far a better review, still numbers show a terrible graphic engine unable to take advantage of the best gpus on the market able to move that graphic monster named watchdogs =_=

I’m referring to the first review, not the better one reported by wotcarramba66!

Oddly enough, they didn’t use the latest 337.88 drivers from Nvidia, ’cause they are claimed to give a nice performance boost all around.

Another odd thing is that they didn’t make available the replay they used, to let us do our own testing under their conditions… and they didn’t specify which map (just a generic “urban map”).

Anyway, for those who protest about the use of such a big and powerful CPU in the review… well guys, if you are going to compare GPUs, you try to reduce the “CPU limit” factor, that’s it. It’s a cpu scalability test.

One thing’s for sure, WoT is terrible. Some high end video cards like the 780 Ti are able to push Watch Dogs @ 1900×1080 at ultra details, which is like 10000 times better than WoT graphically and moves 10 times more things on screen.

The BigWorld engine is really a joke; the developers of Armored Warfare made the best move ever by getting the license for CryEngine.

In my opinion the best engine for WoT would have been the latest Frostbite, the same one as Battlefield 4.

My 2 cents.

P.S. Core i7 @ 4.3 GHz, GeForce GTX 780 Ti @ 1100 MHz… and I still get as low as 40 fps in some circumstances… enough said.

Watch Dogs…

Is, when it comes down to tech, a piece of shit.

Watch Dogs is very buggy, but it still shows graphics that are far ahead of WoT, so looking at fps it’s really sad what the BigWorld engine does.

Try adding HBAO+ to WoT, try adding all the effects the Watch Dogs engine implements and you’ll see WoT fps fall to… 20?

Mate. Games from 2007 and 2008 make fun of Watch Dogs when it comes down to graphics.

What the fuck are you talking about? Every goddamn post you made makes no fucking sense at all. Stop blabbering you fucking moron. Name one fucking game from that period that has better graphics than Watch Dogs. And no, I’m not a Watch Dogs fanboi nor do I have a PC good enough to run it, but your fanboism towards WG is immense.

Now now, mister hater.

I see you disagree with me here, does not mean all my posts will be invalid even for you :) . Though I forgot… one of those Soviet Bias is real people… yeah…

Anyways here are two game names: Crysis. STALKER Clear Sky

If you want include Far Cry 2 too.

Crysis I guess needs no introduction. Not only is it prettier but it runs better and has much better AI than WD.

STALKER Clear Sky (2008):

https://www.youtube.com/watch?v=YAYLHAPPkvw&index=4&list=PLD2B82E405CF9650C

https://www.youtube.com/watch?v=gkLR2tYRubw&index=3&list=PLD2B82E405CF9650C

It also has FAR (as in a few orders of magnitude) better AI than Watch Dogs. Or any other game for that matter.

So now I gave you not 1. Not 2. But 3 games that either look better or are just more advanced than WD.

And I am saying this as a Watch Dogs fan. I play the game on both a 5 YEAR OLD PC (System requirements for Watch Dogs are a lie mate :) ) and a newer rig. And I like it.

Not a WG fanboy. Not a hater like you though :)

I’m pretty sure the system requirements are a lie considering they can be run on the consoles. those things are utter crap, I mean they came out in 2012, they just didn’t go on sale until this year.

Yes they are crap. I run it on medium on a BELOW the requirements machine :D :D

STILL looks bad though :( . Well not bad… not good enough.

This graph is all about CPU limitation. Differences are so small across the GPUs that it is obvious this game has shitty CPU management.

Watch the CPU usage of any other game and you’ll notice that you barely hit 20%. Ever since the first generation of GeForce and Radeon, developers have been moving towards GPU programming, leaving very little work to the CPU.

I still see people thinking that multicore support will somehow work a miracle… here the problem is that the BigWorld engine is a pile of shit growing heavier each release in order to be “visually” competitive.

Check War Thunder’s CPU usage while you are playing, check its graphics and its framerates…. it’s another example.

Yeah, that is what I was saying: a game with 80% CPU usage on a modern CPU is a very poorly programmed game.

To be fair, Ground Forces is unoptimized compared to Air.

I get 20 fps on “Movie” there, but 30-50 at 1650×1090 on WoT :P…

I get 120-200 fps on ground forces, 40-90 on wot °_°

everything set to max in both games

Good for you :) .

open world is a shit engine made even worse by WG.

Even so, it’s still the job of the CPU to push instructions to the GPU, so there is a theoretical possibility that the CPU will limit the frame rate.
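A minimal sketch of that point in Python, assuming the usual simplified model where CPU and GPU work on a frame overlap and the slower of the two sets the frame time. The millisecond figures are hypothetical, picked only to illustrate the effect; they are not measurements from WoT.

```python
def fps(cpu_ms: float, gpu_ms: float) -> float:
    """Frame rate when the slower stage (CPU or GPU) sets the frame time.

    Simplified model: assumes CPU and GPU work fully overlap.
    """
    return 1000.0 / max(cpu_ms, gpu_ms)


if __name__ == "__main__":
    # Hypothetical per-frame costs in milliseconds.
    print(fps(cpu_ms=20.0, gpu_ms=8.0))  # mid-range GPU, CPU is the limit
    print(fps(cpu_ms=20.0, gpu_ms=4.0))  # GPU twice as fast, same frame rate
    print(fps(cpu_ms=10.0, gpu_ms=8.0))  # faster CPU, frame rate goes up
```

In this model, halving the GPU time changes nothing while the CPU is the slower stage, which would explain a chart where very different cards land close together.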

I would much rather have seen the results across a range of CPU’s and a fixed GPU.

You can do that on your own rig by downclocking your cpu

Nope.

I can’t emulate AMD on my Intel or vice versa

Same with the underlying changes made between generations, especially with the Intel chips. There’s a ton being changed down there, and that’s part of why they need new chipsets every other generation. AMD has been working on the same thing for several years; they’ve just been packing it tighter and tighter while being as efficient as possible. That’s why they still have the same chipset as 5 years ago.

I have the R9 270 2 GB with an AMD A8 6500 CPU and do get between 45-55 fps, but only when I turn loads of stuff off. That said, in 9.1 CT 1 I was able to turn loads back on and keep the fps at that level. Not tested 9.1 CT 2 yet.

My housemate has an R9 270X, with an Intel I5 E4670K, 8 GB RAM and an SSD. He just has WoT with VSync turned on at all times because he gets 120 FPS easy if he doesn’t :p.

Needless to say, my 4 year old HP laptop doesn’t have quite the same performance characteristics, although I can eke out 50fps with careful management and graphics settings. Unless it’s Ruinberg on Fire, where I get about 12.

Got a 770, so no problems here at any setting.

What CPU and at what speed? As has been widely noted in the comments, the CPU and the read/write capability of your storage are a lot more important than your GPU.

With my 660 TI and 4770S, this chart is pretty much correct.

i7 920 @ 3.7 GHz

GTX 780 stock

14 GB ram

I’m usually between 40 and 60 FPS; although it’s perfectly playable at 40 FPS, I’d like it if it was more consistently at around 60.

Edit: Oh yeah, and I’m running on a normal 7200 rpm HDD; my SSD is too crammed.

My GTX 680 runs the game on max settings, smooth as silk. FPS ranges from 40 to 60.