Hello everyone,

first, many thanks to world_of_cactus for finding this very interesting video. In it, Maksim Baryshnikov explains how the server architecture works, in a lecture given in Minsk on 13.12.2014 (during the Wargaming Developer Contest event). And it’s… interesting. I wish this was in English, but it is not, and the guy speaks TERRIBLY (hard to understand), but I’ll do my best to bring you the info from it. So, here’s the video.

The reason I think this is worth a deep-dive even for non-developers is that real-time multiplayer infrastructure is one of those problem spaces where the same engineering tradeoffs keep showing up in totally different industries — game studios, live-streaming services, trading platforms, even the people running an online casino at scale all wrestle with the same questions about regional clusters, latency, and failover. Wargaming has been solving these things at MMO scale for years, so even a rough translation of Baryshnikov’s lecture is more useful than it looks at first.

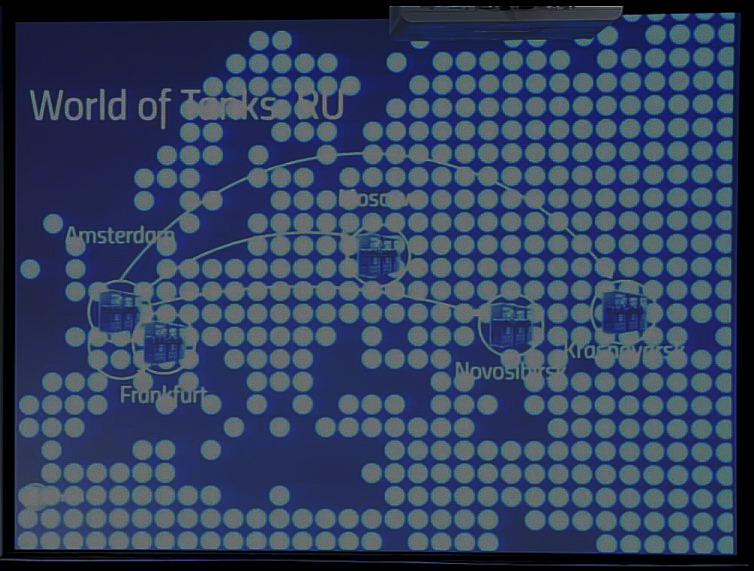

There are various servers within the cluster (for EU it’s Amsterdam and Frankfurt). They are tied together within the cluster. For some reason, the RU cluster has two servers in Europe (one in Amsterdam, one in Frankfurt) and they are tied together as such:

Amsterdam has two datacenters with circa 40 servers, Frankfurt has 70 servers. For comparison, Moscow has 5 datacenters with 250+ servers (Novosibirsk has 80 servers, Krasnoyarsk has 70 servers).

Oddly enough, the game is distributed: for Russians, the login server and garage are in Amsterdam, while the game server is in a separate location. Each datacenter works as a cluster by itself. It’s problematic to access various locations at the same time. Here he gets technical, but the general meaning I understood (okay, I always say this is not my thing, but I know some of the basics) is that the lower-level functions of the clusters are not synchronized (they are asynchronous and distributed). Considering that the login server for Krasnoyarsk is in Amsterdam, the distance is such that latency becomes an issue, hence the asynchronicity.
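To put a rough number on that distance problem, here is a back-of-envelope sketch (mine, not from the lecture). It assumes approximate city coordinates, a straight great-circle fiber run, and light travelling through fiber at about 200,000 km/s. Real routes are longer and add switching delays, so this is only a lower bound.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_SPEED_KM_S = 200_000.0  # light in optical fiber, roughly 2/3 of c

# Approximate city coordinates (latitude, longitude) in degrees.
AMSTERDAM = (52.37, 4.90)
KRASNOYARSK = (56.01, 92.87)


def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time over a straight fiber run."""
    return 2 * distance_km / FIBER_SPEED_KM_S * 1000


distance = great_circle_km(AMSTERDAM, KRASNOYARSK)
rtt = min_round_trip_ms(distance)
print(f"distance: {distance:.0f} km, best-case RTT: {rtt:.0f} ms")
```

Under these assumptions the distance comes out at somewhere around 5,300 km, which means a bit over 50 ms per round trip before any real-world overhead. Anything that had to wait for several such round trips to stay in lockstep would feel sluggish, which fits the explanation that the cross-datacenter parts run asynchronously.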

A battle can run on multiple nodes (server machines in the datacenter), with one half of the players on one node and the other half on another (this is not an ideal case, but it’s possible). At this point he gets really technical, but basically, he states that probably the most important feature of the Wargaming-developed system is its ability to work with desynchronization (reliability). In order to ensure swift access to databases, there are limits on working with them: for example, each database has to be loaded into memory, with no disk access while working with it. There are more limitations, but I just can’t understand exactly how this works (sorry, if there’s someone from the IT crowd who can speak Russian watching this, perhaps he could explain). The result is that, with the number of database transactions per day, Wargaming now has three really bad/strange cases per day caused by database inconsistency. One example was a player who saw himself as not in a clan while the clan still saw him as a member. This state was caused by a series of extremely low-probability events that nevertheless do happen, since the number of data transactions on WG servers is so high.
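The "three cases per day" figure only means something relative to the transaction volume, which I did not catch in the lecture. As a purely illustrative sketch, the daily volumes below are my own assumptions (not Wargaming numbers); the snippet just shows how rare a per-transaction failure would have to be to produce three bad cases a day.

```python
CASES_PER_DAY = 3  # the figure quoted in the lecture


def implied_failure_probability(transactions_per_day: int) -> float:
    """Per-transaction inconsistency probability implied by 3 cases a day."""
    return CASES_PER_DAY / transactions_per_day


# Assumed daily transaction volumes, chosen only for illustration.
for volume in (10**7, 10**8, 10**9):
    p = implied_failure_probability(volume)
    print(f"{volume:>13,} tx/day -> about 1 in {round(1 / p):,} transactions")
```

Whatever the real volume is, the point stands: an event that is practically impossible for a single transaction still shows up daily once there are enough transactions.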

More than 50 components of World of Tanks are handled outside the specific game infrastructure, among them registration, SSO, game data access, clans, CW, portals, forums, shops and others. These components talk to each other using APIs. The fact that the entire system is split into components is advantageous for Wargaming, as it allows them to develop and test each component separately.

The disadvantage of such a distributed system is that, for example, your clan status is handled by the clan service component and the game service has to “request” it. It’s thus possible for various components to fall out of sync. It’s not possible to sync everything in real time (or every time), so WG had to develop a system where the data is updated from the data master component for all the other components, so you don’t get desyncs. Some components, however, can get updated later than the more important ones. That’s why in the game you can see yourself in a clan (if you just entered one) while the portal gets updated later via API. In any case, each component gets rigorously tested, even for really strange cases (such as some other components ceasing to work).
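The "game sees your clan before the portal does" behaviour can be illustrated with a toy model. This is only a minimal sketch of the general idea (a master component queuing updates for replicas in priority order), not Wargaming's actual design. All class names, the priority scheme and the player/clan values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """A component keeping a local copy of data owned by another component."""
    name: str
    priority: int  # lower number = updated earlier (hypothetical scheme)
    clan_of: dict = field(default_factory=dict)


class ClanMaster:
    """Hypothetical 'data master' component for clan membership."""

    def __init__(self, replicas):
        self.clan_of = {}
        self.replicas = sorted(replicas, key=lambda r: r.priority)
        self.pending = []  # queued (replica, player, clan) updates

    def join_clan(self, player, clan):
        # The master changes immediately; replicas only get a queued update.
        self.clan_of[player] = clan
        for replica in self.replicas:
            self.pending.append((replica, player, clan))

    def deliver_one(self):
        """Deliver the next queued update; return False if nothing is left."""
        if not self.pending:
            return False
        replica, player, clan = self.pending.pop(0)
        replica.clan_of[player] = clan
        return True


game = Replica("game", priority=0)
portal = Replica("portal", priority=1)
master = ClanMaster([portal, game])

master.join_clan("player_1", "CLAN")
master.deliver_one()  # only the higher-priority replica is updated so far
print(game.clan_of.get("player_1"), portal.clan_of.get("player_1"))
```

After the single `deliver_one()` call, the game replica already knows about the clan while the portal replica does not. Once the queue drains, both agree again, which is the "updated later" behaviour described above.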

I haven’t bothered to read because I’m pretty sure those servers are 2 monkeys driving bicycles in a cardboard box…

+1 for that comment :D

Hamsters! They are hamsters! Monkeys require too many bananas to maintain low latency. People, please educate yourselves.

Hamsters are too expensive and they also chew on the cables. These days they use ants, but they have a containment problem and some escape filling the entire system with bugs.

I can’t stop laughing !! XD

You could be right. Quite some time has passed since the last time I went there. Still, despite their high cost, I do believe hamsters are a viable alternative to ant servers. Just my 2 cents…

You forgot to mention that the chains have fallen off the bicycles

Monkeys are working as intended.

Are you sure? Aren’t that donkeys?

So this explains why my red control is perma on with ping 50 and below?

I understand the need for component distribution, but why the geographical dispersion, especially if it creates latency issues? Are they trying to centralize everything while the game battle servers are the ones closer to the intended market?

Most likely the load on the login servers and garage servers isn’t high enough to justify more than one server for them. Especially as those are mainly components where you won’t really notice whether it’s 20ms or 200ms ping to them (for example garage chat: whether you get the message right now or “right now” doesn’t matter, whereas ingame those small time frames can make a really big difference).

Didnt understand everything, but I guess it means that their servers are better than what community thinks because of all lags and crashes going on them.

I guess it’s their way to say “EU1 will not die another time”.

This is what happens during servers meltdown.

https://www.youtube.com/watch?v=OppZ0Aayd88

Hahahha, that’s funny xD

And I think every sudden loss of aim, like aiming shake out of the blue and aiming circle spread, is just because of the player being moved from one node to another.

I doubt they move you between nodes throughout actual battle, that’d make no sense.

They work like this :D

http://siemkakto.pl/wp-content/uploads/2014/12/1418322827_yu1kvbx7yri.jpg

lol!

Although WG would point out that you can’t make vodka with Steam or EA servers, which is a huge disadvantage (from Eastern European point of view).

My take on that:

- Where latency and ping is pretty much irrelevant (e.g. garage), centralise to a single location, which allows better server failover capability as it’s not distributed. Additionally, helps with costs as they specifically mentioned the need to load what are likely to be BIG DBs into ram. As such, you wouldn’t want a lot of those all over the place.

- The full architecture of the system is clearly pretty big, as logical elements (e.g. payment system and login) have dedicated servers

- Game servers are linked to from the garage servers and are localised to the users to improve ping rates

- Not sure I believe the comments about the number of servers. That just feels wrong. 40 servers to cover half the EU population? I wouldn’t be surprised if it was more than 40 just to run the various ancillary services such as login, garage and the like, but then with a requirement for thousands of virtual servers to actually run the games.

Assuming those numbers refer to the physical servers, they won’t all be identical so you still can’t quite compare regions based on how many they have catering them.

“How Wargaming Servers Work ? ”

They don’t. End of story.

I tried to watch with translated captions, and I got this “and then the tank horse caught bob”

Yeah, I’ve noticed at some point the dude completely lost it and started talking about bestiality stuff… which brings us back to the first comments about monkeys, hamsters and ants…

Very interesting, part of my studies include stuff like this! Thank you for posting this, SS!

Using database transactions *is* the industry standard to guarantee data consistency. Here, WG says that they have high loads, so that’s their excuse for not employing database transactions, and instead relying on faster but less robust methods. As a result, they save on the infrastructure, but the players have to pay with their gaming experience.

Anyone having lag problems today?

I have perfect connection. But the game is unplayable on both EU servers. WTF?

Interesting read. I’d love to hear about the finer details on how they handle the asynchronous stuff. Also, anyone who thinks their servers are bad should try playing literally any other game online. The servers are very solid compared to the clusterfuck that is EU West for League of Legends.